(Prior Planning Prevents Particularly Poor Performance)

When performing any finite element analysis project, it is critical to plan your approach to ensure success. The most important step is to have clear goals, including your expected results and the format in which these results will be presented. It is also critical to have a reasonable idea of what answers you expect to obtain. For example, one can perform a simple Stress = Force / Area calculation to estimate the anticipated stress value prior to performing any finite element analysis calculations. Having this estimate will reduce the chance of large-scale errors, such as incorrect units. Other areas of planning your finite element analysis include: model and mesh size, material properties, element selection, connection types, and quantity and quality of result sets. Here is a brief checklist that provides a starting point which can be tailored to your individual analysis goals:

● Can symmetry and/or 2D analyses be used? It is always better to solve the simplest model possible first. Additional complexity should be added incrementally. More accurate results can often be achieved by creating an independent, locally refined model and mapping the results via submodeling.

● Set a maximum degree of freedom value based on computational resources. For structural static analyses, as a rule of thumb, a gigabyte of RAM is needed for each 100,000 degrees of freedom. For example, if you are using 3D shell elements that contain six degrees of freedom per node, a model of 200,000 nodes (1.2 million degrees of freedom) would require approximately 12 GB of memory.

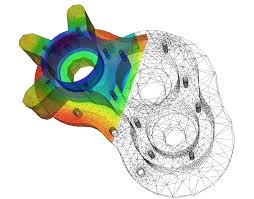

● Anticipate the total element count through initial coarse meshing of a subset of the model. It is always more efficient to start simple with a coarse mesh and then refine the mesh in high-stress areas. Creating a mapped mesh of lower-order 3D brick elements with enhanced strain (extra shape functions) can reduce the solution time significantly and solve complex bending problems very accurately.

● Are large deflection effects required? Typically these are required when a component deforms more than half its thickness. If there is any doubt, turn them on. They can’t hurt. If the effects are insignificant, convergence will occur in the second iteration.

● Do I need to include nonlinear materials? If the design criteria do not allow material yield, there is no reason to include this effect in the model. If it is required, it is still helpful to run the linear analysis first for model checkout. It is generally recommended to isolate the regions where yielding will occur and refine the mesh there to accurately predict the accumulated plastic strain.

● Do I need to include nonlinear contact? Running bonded contact first can help debug modeling issues. If the interface is in complete compression, the nonlinear contact might not be needed. Keeping some interfaces bonded can help ensure that rigid body motion is prevented when nonlinear contact is active, and can improve convergence speed.

● If the analysis requires multiple solutions, anticipate the quantity of results that will be produced by a single solution. For large models with many steps to be solved, it is often necessary to reduce the output by saving data at different intervals. For example, running a time history with 1500 design points, where each point requires 1 GB of storage for results, would require 1.5 terabytes of disk space. If one stored the displacements at every step, but stresses only in the critical areas and only every 3rd step, the same solution might produce a results file of less than 100 GB.

● For modal analyses, the mesh can usually be coarse if only the first few natural frequencies are required. These are proportional to the square root of the stiffness over the mass, and therefore relatively insensitive to mesh density. Be careful not to miss frequencies when using symmetry boundary conditions, which can only predict symmetric modes. Also, be sure to define the mass density correctly and be aware that nonlinearities are not accounted for.
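Two of the checklist items above reduce to quick arithmetic. The following is a minimal Python sketch, not part of any FEA package: it applies the 1 GB per 100,000 degrees of freedom rule of thumb and the results-storage estimate from the checklist. The function names are hypothetical, and the share and fraction values in the reduced-output case are placeholder assumptions chosen only for illustration; replace them with figures from your own solver.

```python
import math

# Rule of thumb from the checklist (structural static analyses).
GB_PER_100K_DOF = 1.0


def estimate_ram_gb(nodes: int, dof_per_node: int) -> float:
    """Estimate RAM from total degrees of freedom using the rule of thumb."""
    total_dof = nodes * dof_per_node
    return total_dof / 100_000 * GB_PER_100K_DOF


def full_results_gb(steps: int, gb_per_step: float) -> float:
    """Storage needed if every result item is saved at every step."""
    return steps * gb_per_step


def reduced_results_gb(
    steps: int,
    gb_per_step: float,
    disp_share: float,         # ASSUMED share of a full result set taken by displacements
    stress_share: float,       # ASSUMED share of a full result set taken by stresses
    critical_fraction: float,  # ASSUMED fraction of the model treated as critical
    stress_every: int,         # save stresses only every N-th step
) -> float:
    """Storage if displacements are saved every step and stresses are
    saved only in critical areas, only every `stress_every` steps."""
    disp_gb = steps * gb_per_step * disp_share
    stress_steps = math.ceil(steps / stress_every)
    stress_gb = stress_steps * gb_per_step * stress_share * critical_fraction
    return disp_gb + stress_gb


if __name__ == "__main__":
    # Shell model from the checklist: 200,000 nodes, 6 DOF per node.
    print(f"RAM estimate: {estimate_ram_gb(200_000, 6):.0f} GB")

    # Time history from the checklist: 1500 points at 1 GB each.
    print(f"Full output: {full_results_gb(1500, 1.0):.0f} GB")

    # Placeholder shares and fractions; NOT taken from the article.
    reduced = reduced_results_gb(1500, 1.0, 0.05, 0.4, 0.1, 3)
    print(f"Reduced output (illustrative inputs): {reduced:.0f} GB")
```

Running the estimate before meshing the full model makes it easy to see whether the planned node count or output frequency fits the available machine.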

This is a short list of some guidelines for analysis planning and may need to be enhanced depending upon your application. The key to planning your finite element analysis is to start with a very simple analysis and slowly introduce complexity. Those who create the simple example problem that others “don’t have time to perform” will always reap the rewards of more accurate, on-time and under-budget finite element analysis projects!
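As a concrete instance of starting simple, the Stress = Force / Area hand calculation mentioned at the start can be scripted and compared against the solver output. This is only a sketch: the load, area, agreement factor, and the "FEA" values are made-up illustration numbers, and the function names are hypothetical.

```python
def axial_stress_mpa(force_n: float, area_mm2: float) -> float:
    """Nominal axial stress. With force in N and area in mm^2 the result is in MPa."""
    if area_mm2 <= 0:
        raise ValueError("area must be positive")
    return force_n / area_mm2


def agrees_within_factor(fea_value: float, hand_value: float, factor: float = 2.0) -> bool:
    """True if the FEA value is within a multiplicative `factor` of the hand estimate.

    The default factor of 2 is an arbitrary assumption; the point is to catch
    order-of-magnitude mistakes such as inconsistent units, not small differences.
    """
    if hand_value == 0:
        raise ValueError("hand estimate must be non-zero")
    ratio = abs(fea_value) / abs(hand_value)
    return 1.0 / factor <= ratio <= factor


if __name__ == "__main__":
    # Hypothetical bar: 10 kN axial load on a 50 mm^2 cross section.
    hand = axial_stress_mpa(10_000.0, 50.0)
    print(f"Hand estimate: {hand:.0f} MPa")

    # Hypothetical solver outputs: one plausible, one reported in Pa by mistake.
    for fea in (215.0, 2.15e8):
        status = "plausible" if agrees_within_factor(fea, hand) else "check units and inputs"
        print(f"FEA value {fea:g}: {status}")
```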

High Performance Computing for FEA and CFD Analysis

A common question our FEA and CFD analysis customers ask us is “What hardware should I buy?” Purchasing a new high-performance computing (HPC) capable machine can be an intimidating process and lead to paralysis by three-letter acronym. CPU, GPU, SAS, SSD, AVX – who came up with these names? The good news is that choosing the right HPC hardware for FEA and CFD analysis is mostly influenced by three factors: CPU, RAM, and hard drive. While there are additional bells and whistles we can use to fine-tune our system, these three will have the greatest impact. Let’s take a closer look:

CPU – The number cruncher. The CPU will have a direct effect on how fast the required mathematical operations can be performed. There are two primary factors to consider when selecting a CPU – clock rate and number of cores. Clock rate, measured in Gigahertz (GHz), refers to the operating frequency of the CPU and determines the ultimate rate at which operations can be executed on each core. The number of cores, meanwhile, allows for parallelism of these operations. It’s common for a single CPU to have 8 or more cores that allow certain mathematical calculations to be performed simultaneously, greatly speeding up our analyses. It should be noted that your FEA or CFD code may require additional licenses in order to access parallelized computing, so take this into account when considering the cost of your new HPC system.
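Because only certain calculations run simultaneously, adding cores does not scale solution speed linearly. The article does not name it, but a common way to reason about this is Amdahl's law, sketched below. The parallel fraction is an assumed input that you would have to measure or estimate for your own solver and model; the 0.9 used in the example is purely illustrative.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Ideal speedup from Amdahl's law: 1 / ((1 - p) + p / n)."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # ASSUMPTION: 90% of the run time parallelizes; not a property of any real code.
    for cores in (1, 2, 4, 8, 16):
        print(f"{cores:2d} cores -> {amdahl_speedup(0.9, cores):.2f}x")
```

An estimate like this can help weigh the cost of extra cores and parallel licenses against the speedup they are likely to deliver.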

RAM – The CPU’s assistant. RAM acts as fast, temporary storage for the operating system and the applications it runs. If the amount of RAM on your system exceeds the size of the files created by the equation solvers during run time, then these files can be stored entirely in memory if your software supports this option. On the other hand, if the equation solver files are larger than the amount of RAM, they will have to be written to the hard drive. Reading and writing data to RAM can be one to two orders of magnitude faster than reading and writing data to the hard drive. Ensuring your system has sufficient memory to handle the typical analysis you run is key to efficiently performing FEA and CFD analyses.
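The comparison described above can be written as a quick check. This is a minimal sketch with a hypothetical function name; the memory reserved for the operating system and other applications is an assumed default, and the solver file size is something you would read from your own solver's memory estimate. The labels "in-core" and "out-of-core" are the terms commonly used for these two situations.

```python
def solver_storage_mode(
    solver_files_gb: float,
    installed_ram_gb: float,
    reserved_gb: float = 4.0,  # ASSUMED headroom for the OS and other applications
) -> str:
    """Return 'in-core' if the solver files fit in available RAM, else 'out-of-core'."""
    available_gb = installed_ram_gb - reserved_gb
    return "in-core" if solver_files_gb <= available_gb else "out-of-core"


if __name__ == "__main__":
    # Hypothetical solver file sizes checked against a 64 GB machine.
    for files_gb in (20.0, 58.0, 100.0):
        print(f"{files_gb:5.0f} GB of solver files: {solver_storage_mode(files_gb, 64.0)}")
```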

Hard Drive – The CPU’s filing cabinet. The hard drive provides permanent storage of results as well as additional scratch space the equation solvers may need for large analyses. For analyses in which results get written frequently (e.g. nonlinear finite element analyses and transient CFD analyses), the hard drive can play a very important role in determining the solution speed. Since hard drive capacity is relatively inexpensive, the more important concern when buying a new system is I/O speed. RAID (Redundant Array of Independent Disks) configurations allow multiple drives to be connected together, appearing as a single drive to the user and allowing I/O to be performed in parallel. A dedicated RAID 0 array of four or more disks (SAS or SSD) and an independent RAID controller are ideal for a new system.

When building a new system, take into account the characteristics of not only the analyses you currently run but also what you plan to run in the near future. How much memory do they require? How often do they write to the hard drive? Ultimately you want to build a well-balanced machine that is suitable for your analysis purposes. Having 128 GB of RAM and a RAID 0 array won’t help much if your CPU is from the 1980s. Having the appropriate hardware is key to getting the most out of your software!